What follows is the English version of an article I was asked to write for Unipress, the magazine of the University of Bern, for a themed issue on “digital realities”. It appears in a German version in this month’s issue.

Computers regulate our lives. We increasingly keep our personal and cultural memories in “the cloud”, on services such as Facebook and Instagram. We rely on algorithms to tell us what we might like to buy, or see. Even our cars can now detect when their drivers are getting tired, and make the inviting suggestion that we pull off the road for a cup of coffee.

But there is a great irony that lies at the heart of the digital age, which is this: the invention of the computer was the final nail in the coffin of the great dream of Leibniz, the seventeenth-century polymath. The development of scientific principles that took place in his era of the Enlightenment gave rise to a widely shared belief that humans were a sort of extraordinarily complex biological mechanism, a rational machine. Leibniz himself had a firm belief that it should be possible to come up with a symbolic logic for all human thought and a calculus to manipulate it. He envisioned that the princes and judges of his future would be able to use this “universal characteristic” to calculate the true and just answer to any question that presented itself, whether in scientific discourse or in a dispute between neighbors. Moreover, he firmly believed that there was nothing that would be impossible to calculate – no Unknowable.

Over the course of the next 250 years, a language for symbolic logic, known today as Boolean logic, was developed and proven to be complete. Leibniz’ question was refined: is there a way, in fact, to prove anything at all? To answer any question? More precisely, given a set of starting premises and a proposed conclusion, is there a way to know whether the conclusion can be either reached or disproven from those premises? This challenge, posed by David Hilbert in 1928, became known as the Entscheidungsproblem.

After Kurt Gödel demonstrated the impossibility of answering the question in 1930, Alan Turing in 1936 proved the positive existence of insoluble problems. He did this by imagining a sort of conceptual machine, with which both a set of mathematical operations and its starting input could be encoded, and he showed that there exist combinations of operation and input that would cause the machine to run forever, never finding a solution.

Conceptually, this is a computer! Turing’s thought experiment was meant to prove the existence of unsolvable problems, but he was so taken with the problems that could be solved with his “machine” that he wanted to build a real one. Opportunities presented themselves during and immediately after WWII for Turing to build his machines, specifically Enigma decryption engines, and computers were rapidly developed in the post-war environment even as Turing’s role in conceiving of them was forgotten for decades. And although Turing had definitively proved the existence of the Unknowable, he remained convinced until the end of his life that a Turing machine should be able to solve any problem that a human can solve – that it should be possible to build a machine complex enough to replicate all the functions of the human brain.

Another way to state Turing’s dilemma is: he proved that there exist unsolvable problems. But do human reasoning and intuition have the capacity to solve problems that a machine cannot? Turing did not believe so, and he spent the rest of his life pursuing, in one way or another, a calculating machine complex enough to rival the human brain. And this leads us straight to the root of the insecurity, hostility even, that finds its expression in most developed cultures toward automata and computers in particular. If all human thought can be expressed via symbolic logic, does it mean that humans have no special purpose beyond computers?

Into this minefield comes the discipline known today as the Digital Humanities. The early pioneers of the field, known until the early 2000s as “Humanities Computing”, were not too concerned with the question – computers were useful calculating devices, but they themselves remained firmly in charge of interpretation of the results. But as the technology that the field’s practitioners used developed against a cultural background of increasingly pervasive technological transformation, a cultural clash between the “makers” and the “critics” within Humanities Computing was inevitable.

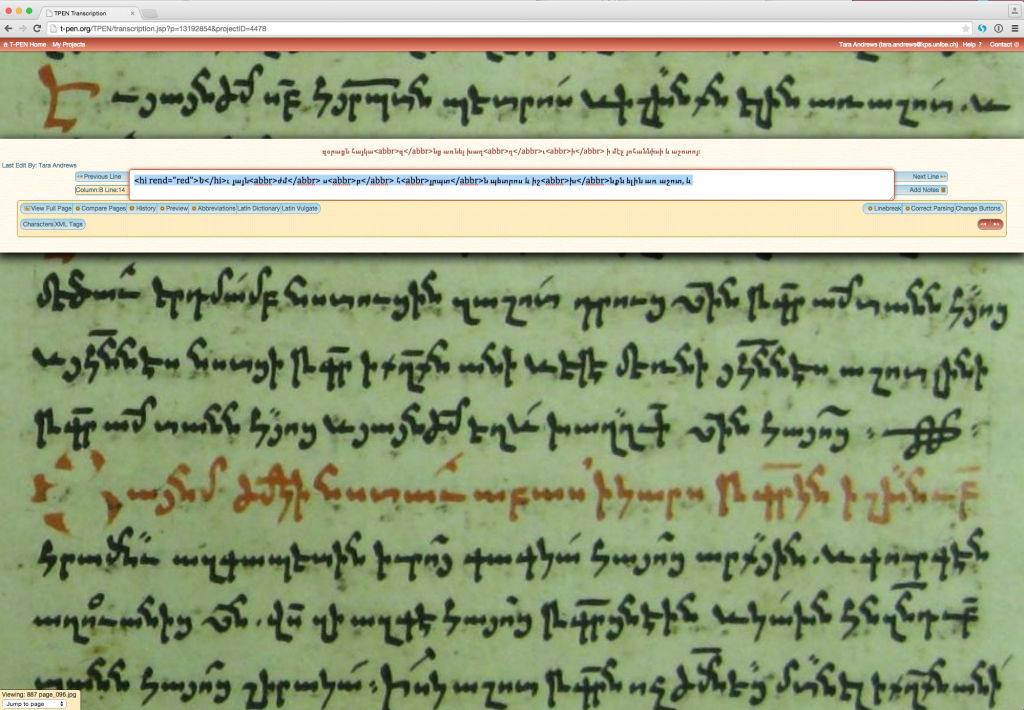

Digital Humanities is, more than is usual for the academic disciplines of the humanities, concerned with making things. This is “practice-based” research – the scholar comes up with an idea, writes some code to implement it, decides whether it “works”, and draws conclusions from that. And so Digital Humanities has something of a hacker culture – playful, even arrogant or hubristic sometimes – trying things just to see what happens, building systems to find out whether they work. This is the very opposite of theoretical critique, which has been the cornerstone of what many scholars within the humanities perceive as their specialty. Some of these critics perceive the “hacking” as necessarily being a flight from theory – if Digital Humanities practitioners are making programs, they are not critiquing or theorizing, and their work is thus flawed.

Yet these critics tend to underestimate the critical or theoretical sophistication of those who do computing. Most Digital Humanities scholars are very well aware of the limitations of what we do, and of the fact that our science is provisional and contingent. Nevertheless, we are often guilty of failing to communicate these contingencies when we announce our results, and our critics are just as often guilty of a certain deafness when we do mention them.

A good example of how this dynamic functions can be seen with ‘distant reading’. A scholar named Franco Moretti pointed out in the early 2000s that the literary “canon” – those works that come to mind when you think of, for example, nineteenth-century German literature – is actually very small. It consists of the things you read in school, those works that survived the test of time to be used and quoted and reshaped in later eras. But that’s a very small subset of the works of German literature that were produced in the 19th century! Our “canon” is by its very nature unrepresentative. But it has to be, since a human cannot possibly read everything that was published in 100 years.

So can there be such a thing as reading books on a large scale, with computers? Moretti and others have tried this, and it is called distant reading. Rather than personally absorbing all these works, the scholar has them all digitized and on hand, so that comparative statistical analysis can be done and patterns in the canon can be examined against the entire background in which it was written.

As a result we now have two models of ‘reading’. One says that human interpretation should be the starting point in our humanistic investigations; the other says that human interpretation should be the end point, delayed as long as possible while we use machines to identify and highlight patterns. Once that’s done, we can make sense of the patterns.
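To make the second model a little more concrete, here is a minimal sketch in Python of the kind of comparison it implies. It is not Moretti's method, only an illustration: the function names `relative_frequencies` and `overrepresented` are hypothetical, the three "texts" are invented toy data, and real distant reading works on thousands of digitized volumes with far more careful statistics. The sketch counts how large a share each word has in a small "canon" and in the whole corpus, then lists the words whose share is most inflated in the canon.

```python
from collections import Counter
import re


def relative_frequencies(texts):
    """Return each word's share of all word tokens in the given texts."""
    counts = Counter()
    for text in texts:
        # Lowercase and split into runs of word characters.
        counts.update(re.findall(r"\w+", text.lower()))
    total = sum(counts.values())
    return {word: n / total for word, n in counts.items()}


def overrepresented(canon_texts, corpus_texts, top=3):
    """List words whose share in the canon most exceeds their share in the corpus."""
    canon = relative_frequencies(canon_texts)
    corpus = relative_frequencies(corpus_texts)
    diffs = {w: canon[w] - corpus.get(w, 0.0) for w in canon}
    # Largest difference first; ties are broken alphabetically.
    return sorted(diffs, key=lambda w: (-diffs[w], w))[:top]


# Invented toy data: the "canon" is just the first text of the corpus.
corpus = [
    "the forest was dark and the river was cold",
    "the factory was loud and the city was grey",
    "the river ran past the mill and the forest",
]
canon = corpus[:1]

if __name__ == "__main__":
    print(overrepresented(canon, corpus))
```

The machine only surfaces the pattern. Deciding whether an inflated word says anything about the canon is still left to the human reader, which is exactly the point at which interpretation enters in this model.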

And so what we digital humanities practitioners, the makers, tend toward is a sort of hybrid model between human interpretation and machine analysis. In literature, this means techniques such as distant reading; in history, it might mean social network analysis of digitized charters or a time-lapse map of trading routes based on shipping logs. The ultimate question that our field faces is this: can we make out of this hybrid, out of this interaction between the digital and the interpretative, something wholly new?

The next great frontier in Digital Humanities will be exactly this: whether we can extend our computational models to handle the ambiguity, uncertainty, and interpretation that are ubiquitous in the humanities. Up to now everything in computer science has been based on 1 and 0, true and false. These two extraordinarily simple building blocks have enabled us to create machines and algorithms of stunning sophistication, but there is no “maybe” in binary logic, no “I believe”. Computer scientists and humanists are working together to try to bridge that gap; to succeed will produce true thinking machines.

A few months after this particular debate, I was invited to join Joris and several other members of the Alfalab project at KNAW in preparing a paper for the ‘Computational Turn’ workshop in early 2010, which was eventually included in a collection that arose from the workshop. In the article we take a look at the processes by which knowledge is formalized in various fields in the humanities, and how the formalization can be resisted by scholars within each field. Among other things we presented a form of this idea for the formalization of historical research. Three years later I am still working on making it happen.
